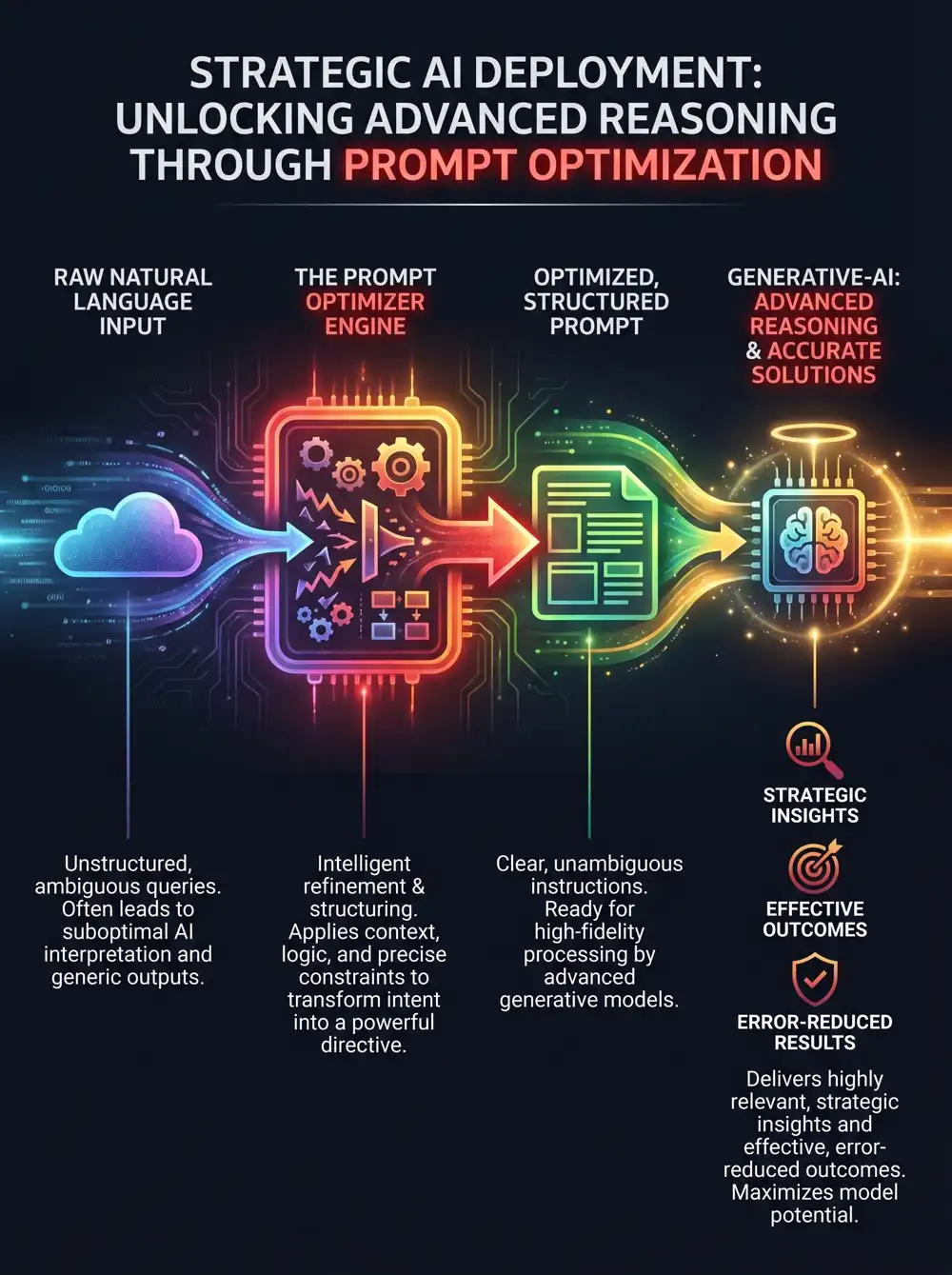

A prompt optimiser is an essential tool that acts as an intelligent translation layer between your intent and advanced large language models. It automatically converts everyday language into the precise, structured instructions that AI models require to function at their peak. This automated refinement is crucial for eliminating common human errors, such as vagueness or cognitive bias, which frequently lead to irrelevant answers or AI hallucinations, ensuring you get the best possible performance from services like Claude.

By standardizing the input, a prompt optimiser ensures the AI receives a technically superior prompt every time. This overcomes the natural-language bottleneck, delivering better reliability and output quality regardless of your personal expertise in prompt engineering.

The Power of Neutral Language for Advanced Reasoning

A key function of a superior prompt optimiser is its ability to rephrase your queries using neutral, objective language. This neutrality is vital because it helps avoid the classic "garbage in, garbage out" dilemma, promoting advanced reasoning. Instead of guiding an AI toward a preconceived conclusion, a neutral prompt encourages a model like Claude to analyze a problem on its merits, allowing it to engage in a more logical and deductive process.

How a Prompt Optimiser Mitigates Common Errors

By systematically refining user inputs, a prompt optimiser addresses predictable types of human error that degrade AI performance. To better understand this impact, especially for sophisticated models, we can categorize these improvements into structural, contextual, and logical optimizations. This structured approach ensures that prompts are crafted to deliver the most consistent and high-quality results.

1. Structural & Formatting Optimizations

Proper prompt structure and format are foundational for machine-readable outputs and overall clarity, which is a documented best practice for improving AI performance.

| Type of Human Error | Description of Error | Optimiser Solution |

|---|---|---|

| Ambiguity & Vagueness | The user provides a generic request like asking "Write a report" without defining its scope, length, or audience. | Context Injection: The optimiser automatically expands the prompt with critical parameters for length, tone, and audience, ensuring a comprehensive response from models like Claude. |

| Incorrect Syntax | The user needs data for a script or database but forgets to specify the required structure. | Schema Enforcement: The tool wraps the prompt in strict instructions using XML tags to output valid JSON or another machine-readable format, a technique proven to increase reliability. |

2. Contextual & Cognitive Optimizations

Providing the right context prevents the AI from making biased or uninformed assumptions, which is critical for improving natural language processing accuracy.

| Type of Human Error | Description of Error | Optimiser Solution |

|---|---|---|

| Cognitive Bias | The user inadvertently uses leading language that biases the AI toward a specific, potentially incorrect, answer. | Neutral Language Reframing: The optimiser rephrases the query to be objective and factual, encouraging data-driven answers rather than user-suggested ones. This is vital for conversational AIs. |

| Context Amnesia | The user forgets to include necessary background information, like from earlier in a workflow or from a large document. | Dynamic Retrieval: The system automatically retrieves and appends relevant documentation, providing an LLM like Claude with the full context it needs to leverage its large context window effectively. |

3. Logic & Reasoning Optimizations

Complex tasks require the AI to show its work to avoid calculation errors or logical fallacies, moving beyond simple zero-shot prompting for better results.

| Type of Human Error | Description of Error | Optimiser Solution |

|---|---|---|

| Lack of Step-by-Step Reasoning | The user asks for a complex conclusion without instructing the AI to break down the problem. | Chain-of-Thought (CoT) Injection: The optimiser inserts instructions for the AI to "think step-by-step," a method that unlocks the advanced reasoning capabilities of models like Anthropic's Claude. |

Ready to transform your AI into a genius, all for Free?

Create your prompt. Write it in your own voice and style.

Click the Prompt Rocket button.

Receive your Better Prompt in seconds.

Choose your favourite AI model, like Claude, and click to share.

| Role | Position | Unique Selling Point | Flexibility | Problem Solving | Saves Money | Solutions | Summary | Use Case |

|---|---|---|---|---|---|---|---|---|

| Coders | Developers | Unleash your 10x | No more hopping between agents | Reduce tech debt & hallucinations | Get it right 1st time, reduce token usage | Minimises scope creep and code bloat | Generate clear project requirements | Merge multiple ideas for Claude |

| Leaders | Professionals | Be good, Be better prompt | No vendor lock-in, works with any AI | Reduces excessive complementary language | Prompt more assertively and instructively | Improved data privacy, trust and safety | Summarise outline requirements | Prompt refinement and productivity boost |

| Higher Education | Students | Give your studies the edge | Use your favourite, or try a new AI chat | Improved accuracy and professionalism | Saves tokens, extends context, it’s FREE | Articulate maths & coding tasks easily | Simplify complex questions and ideas | Prompt smarter and retain your identity |