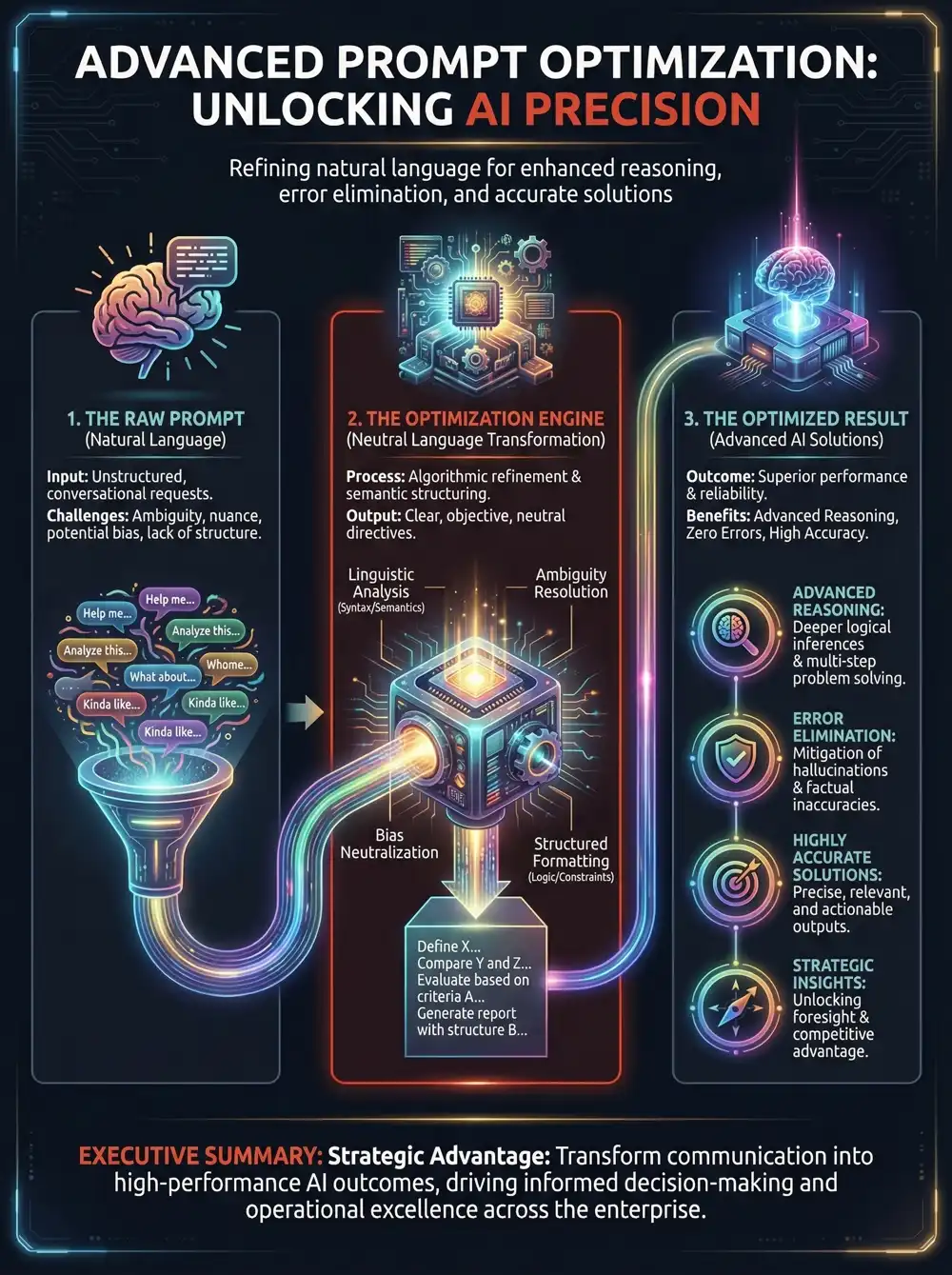

A prompt optimizer acts as a translation layer between your intent and a large language model. It automatically refines your natural language into the precise, structured instructions that AI models respond to best. This refinement helps remove common human errors, such as vagueness or cognitive bias, which often lead to irrelevant answers or AI hallucinations. By standardizing your input, a prompt optimizer gives an AI like Claude a consistently well-formed prompt, improving the reliability of its output regardless of your prompt engineering skill level.

The Advantage of Neutral Language for Advanced Reasoning

A primary feature of a quality prompt optimizer is its capacity to rephrase your questions using neutral language. Neutral language is objective, factual, and devoid of emotional or leading terms that can unintentionally bias an AI's response. This neutrality is crucial for avoiding the "garbage in, garbage out" problem, fostering advanced reasoning and effective problem-solving. Instead of steering the AI toward a predetermined answer, a neutral prompt encourages the model to analyze a problem based on its merits. This change from subjective inquiry to objective analysis enables the AI, including models from Anthropic, to use a more logical and deductive process, yielding more precise solutions.
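
As a rough illustration of neutral reframing, the sketch below strips a few leading phrases from a question and restates it as a claim to evaluate. The pattern list and the function names (`LEADING_PATTERNS`, `find_leading_phrases`, `reframe_neutrally`) are assumptions made for this example; a real optimizer would more likely use a language model than a fixed list of regular expressions.

```python
import re
from typing import List

# Hypothetical, deliberately short list of leading phrases (an assumption
# for this sketch, not an exhaustive or authoritative set).
LEADING_PATTERNS = [
    r"\bdon't you (?:think|agree)(?: that)?\b",
    r"\bisn't it (?:true|obvious)(?: that)?\b",
    r"\bobviously\b",
    r"\bclearly\b",
    r"\beveryone knows(?: that)?\b",
]


def find_leading_phrases(prompt: str) -> List[str]:
    """Return the leading phrases found in the prompt, in pattern order."""
    found = []
    for pattern in LEADING_PATTERNS:
        found.extend(
            m.group(0) for m in re.finditer(pattern, prompt, flags=re.IGNORECASE)
        )
    return found


def reframe_neutrally(prompt: str) -> str:
    """Remove leading phrases and restate the rest as a claim to evaluate."""
    cleaned = prompt
    for pattern in LEADING_PATTERNS:
        cleaned = re.sub(pattern, "", cleaned, flags=re.IGNORECASE)
    # Collapse leftover whitespace and trim stray punctuation at the edges.
    cleaned = re.sub(r"\s+", " ", cleaned).strip(" ,?")
    return (
        "Evaluate the following claim on its merits. "
        "Present evidence for and against before concluding.\n"
        f"Claim: {cleaned}"
    )


question = "Don't you agree that remote work is obviously more productive?"
print(find_leading_phrases(question))
print(reframe_neutrally(question))
```

The reframed prompt asks the model to weigh evidence on both sides instead of confirming the opinion embedded in the original question.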

How a Prompt Optimizer Corrects Common Errors

By systematically enhancing user inputs, a prompt optimizer tackles predictable human errors that can reduce AI performance. To better grasp this effect, we can divide these improvements into structural, contextual, and logical optimizations. This methodical approach, especially beneficial for complex tasks assigned to an AI such as Claude, ensures that prompts are designed to produce the most consistent and high-quality outcomes.

1. Structural & Formatting Optimizations

Clear prompt structure and explicit format instructions are essential when the AI's output must be machine-readable and easy for downstream tools to parse.

| Type of Human Error | Description of Error | Optimizer Solution |
|---|---|---|
| Ambiguity & Vagueness | The user gives a general request without specifying its scope, length, or intended audience, which reduces prompt clarity. | Context Injection: The optimizer automatically enhances the prompt to include key parameters for length, tone, and target audience, ensuring a thorough response from models like Claude. |
| Incorrect Syntax | The user requires data for a script or database but fails to specify the necessary structure, such as XML or JSON. | Schema Enforcement: The tool encloses the prompt in strict instructions to produce valid JSON, XML, or another machine-readable format. |
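
The two structural fixes in the table can be sketched as simple string transformations. This is a minimal sketch, not the tool's actual implementation: the function names (`inject_context`, `enforce_json_schema`), the default audience, tone, and length values, and the example schema are all assumptions chosen for illustration.

```python
import json


def inject_context(prompt: str, audience: str = "general readers",
                   tone: str = "neutral", max_words: int = 300) -> str:
    """Append explicit scope parameters to an otherwise vague request."""
    return (
        f"{prompt.strip()}\n\n"
        f"Audience: {audience}\n"
        f"Tone: {tone}\n"
        f"Length: at most {max_words} words"
    )


def enforce_json_schema(prompt: str, schema: dict) -> str:
    """Wrap a prompt in instructions asking for JSON that matches a schema."""
    return (
        f"{prompt.strip()}\n\n"
        "Respond with valid JSON only, with no surrounding prose.\n"
        "The JSON must match this schema:\n"
        f"{json.dumps(schema, indent=2)}"
    )


# Hypothetical schema used only for this example.
example_schema = {
    "type": "object",
    "properties": {"summary": {"type": "string"}},
    "required": ["summary"],
}

optimized = enforce_json_schema(
    inject_context("Write about electric cars."), example_schema
)
print(optimized)
```

Keeping each fix as a separate function lets them be applied independently or chained, as in the last call above.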

2. Contextual & Cognitive Optimizations

Supplying the right background context in a prompt prevents the AI from making biased or uninformed assumptions.

| Type of Human Error | Description of Error | Optimizer Solution |
|---|---|---|
| Cognitive Bias | The user unintentionally employs leading language that steers the AI toward a particular, and possibly wrong, answer. | Neutral Language Reframing: The optimizer rephrases the query to be objective and factual, promoting data-driven answers instead of user-influenced ones, a technique that is highly effective with Claude. |
| Context Amnesia | The user neglects to include essential background information or constraints from earlier in a conversation or workflow. | Dynamic Retrieval: The system automatically finds and adds relevant documentation, giving the LLM the complete context it requires to respond accurately. |
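
Dynamic retrieval can be approximated with a simple word-overlap search over a small document store, as sketched below. The in-memory `DOCS` dictionary, its contents, and the function names are hypothetical; a production system would more plausibly use embeddings or a search index, which this sketch does not attempt to model.

```python
import re
from typing import Dict, List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: Dict[str, str], top_k: int = 1) -> List[str]:
    """Return names of up to top_k documents sharing the most words with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(body)), name)
              for name, body in documents.items()]
    # Drop documents with no overlap, then sort by score (ties broken by name).
    scored = [(score, name) for score, name in scored if score > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:top_k]]


def add_context(query: str, documents: Dict[str, str], top_k: int = 1) -> str:
    """Prepend the retrieved documents to the query; leave it unchanged if none match."""
    names = retrieve(query, documents, top_k)
    if not names:
        return query
    context = "\n\n".join(f"[{name}]\n{documents[name]}" for name in names)
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical documents, invented purely for illustration.
DOCS = {
    "refund_policy": "Refunds are issued within 14 days of purchase.",
    "shipping": "Orders ship within two business days.",
}

print(add_context("How long do refunds take after purchase?", DOCS))
```

Word overlap is crude, but it shows the shape of the step: score the available documents against the query, then prepend the best matches so the model does not have to guess at missing background.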

3. Logic & Reasoning Optimizations

Complex tasks benefit when the AI shows its reasoning, which helps reduce calculation mistakes and logical errors.

| Type of Human Error | Description of Error | Optimizer Solution |
|---|---|---|
| Lack of Step-by-Step Reasoning | The user requests a complex answer without directing the AI to break down the problem into smaller steps. | Chain-of-Thought (CoT) Injection: The optimizer adds instructions for the AI to "think step-by-step," prompting a model like Claude to lay out its reasoning before providing a final answer, which makes logical errors easier to catch. |
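
A chain-of-thought injection step might look like the sketch below: it appends a reasoning instruction unless the prompt already contains one, and a small helper pulls the final answer out of a response. The wording of `COT_SUFFIX`, the `Answer:` convention, and both function names are assumptions made for this example.

```python
from typing import Optional

# Hypothetical instruction text; the exact wording is an assumption.
COT_SUFFIX = (
    "Think step by step. Show your reasoning, then give the final answer "
    "on its own line prefixed with 'Answer:'."
)


def inject_chain_of_thought(prompt: str) -> str:
    """Append a step-by-step instruction unless the prompt already has one."""
    normalized = prompt.lower().replace("-", " ")
    if "step by step" in normalized:
        return prompt
    return f"{prompt.strip()}\n\n{COT_SUFFIX}"


def extract_final_answer(response: str) -> Optional[str]:
    """Return the text after the last 'Answer:' line, or None if there is none."""
    for line in reversed(response.splitlines()):
        stripped = line.strip()
        if stripped.startswith("Answer:"):
            return stripped[len("Answer:"):].strip()
    return None


print(inject_chain_of_thought("What is 17 * 23?"))
```

Asking for the final answer on a marked line keeps the reasoning visible for review while still letting a script read the result.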

Ready to transform your AI into a genius, all for free?

1. Create your prompt. Write it in your own voice and style.
2. Click the Prompt Rocket button.
3. Receive your Better Prompt in seconds.
4. Choose your favorite AI model, like Claude, and click to share.

| Role | Position | Unique Selling Point | Flexibility | Problem Solving | Saves Money | Solutions | Summary | Use Case |
|---|---|---|---|---|---|---|---|---|
| Coders | Developers | Unleash your 10x | No more hopping between different AI agents | Reduce tech debt & hallucinations with Claude | Get it right the first time, reduce token usage | Minimizes scope creep and code bloat | Generate clear project requirements | Merge multiple ideas and prompts |
| Leaders | Professionals | Be good, Be better prompt | No vendor lock-in, works with any AI | Reduces excessive complimentary language | Prompt more assertively and instructively | Improved data privacy, trust, and safety | Summarize outline requirements for Claude | Prompt refinement and productivity boost |
| Higher Education | Students | Give your studies the edge | Use your favourite, or try a new AI chat | Improved accuracy and professionalism | Saves tokens, extends context, it’s FREE | Articulate maths & coding tasks for Claude | Simplify complex questions and ideas | Prompt smarter and retain your identity |